For the past few months, I have been using MVU as the main pattern for my TUI and GUI applications. I realized that, for most of my projects that require some form of rendering, its simplicity and separation of concerns make for an easier, more modular and more maintainable system than the alternatives.

What is it?

MVU (Model-View-Update) was introduced by Elm, where it is known as The Elm Architecture, and has since been adopted by frameworks like iced, a GUI library for Rust.

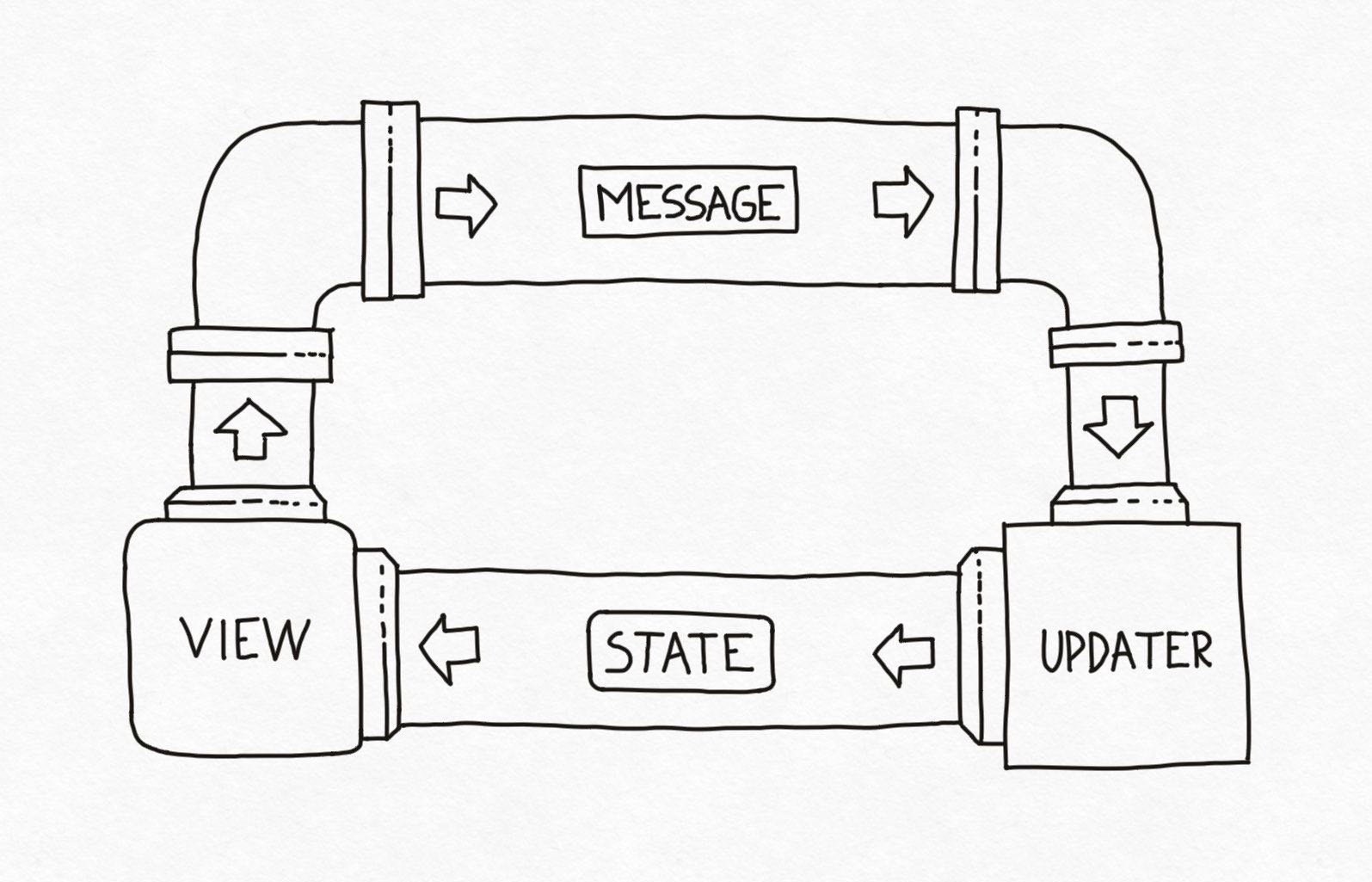

The model is the state of the application, the view is the presentation layer for that state, and the update is the only entity allowed to change it. The lifecycle loop starts with the initial state of the model. The view renders the state and collects user actions. The update receives these actions as messages and applies the necessary changes to the state. Then the loop starts again with the new state.

This is it! A true separation of concerns under a simple lifecycle loop.
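To make the loop concrete, here is a minimal sketch of a single turn of it, written generically so it assumes nothing about the application. The `step` helper and the toy integer model are illustrative names of mine, not part of any library: the view receives the model by shared reference and may return a message, and only the update produces the next model.

```rust
/// One turn of the MVU loop: the view reads the model and may emit a message;
/// only the update turns (model, message) into the next model.
fn step<M, Msg>(
    model: M,
    view: impl Fn(&M) -> Option<Msg>,
    update: impl Fn(M, Msg) -> M,
) -> M {
    match view(&model) {
        Some(msg) => update(model, msg),
        None => model, // no user action, so the model is returned untouched
    }
}

fn main() {
    // Toy model: an i32. The view asks for +1 until the model reaches 3,
    // and the update applies the requested delta.
    let view = |m: &i32| if *m < 3 { Some(1) } else { None };
    let update = |m: i32, delta: i32| m + delta;

    let mut model = 0;
    for _ in 0..5 {
        model = step(model, &view, &update);
    }
    println!("{model}"); // prints 3
}
```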

Important notes

- State is not mutated by the view, ever! Embrace its immutability.

- State represents the whole application and nothing is stored in the view.

- The view doesn’t care about logic; it just knows how to represent a state.
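In Rust, the compiler can enforce the notes above. In this minimal sketch (the `State` and `view` names are illustrative), the view is a plain function that receives `&State`, so any attempt to mutate the state inside it is rejected at compile time, and it has no fields of its own in which to store anything.

```rust
struct State {
    counter: usize,
}

// The view gets a shared reference: it can read the state, and the compiler
// rejects any mutation such as `state.counter += 1` inside this function.
// It is a plain function with no fields, so it cannot store anything either.
fn view(state: &State) -> String {
    format!("Counter: {}", state.counter)
}

fn main() {
    let state = State { counter: 3 };
    println!("{}", view(&state)); // prints "Counter: 3"
}
```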

How?

Let me illustrate the concept with a simple counter.

```rust
enum Message {
    CounterIncrease,
    CounterDecrease,
}

struct State {
    pub counter: usize,
}

fn main() {
    // set up the initial state
    let mut state = State { counter: 0 };

    // loop of the application
    loop {
        // render the current state (`draw` is the view, defined elsewhere)
        let new_msg: Option<Message> = draw(&state);

        // in this case, let's update only upon action, but
        // nothing prevents you from updating at each iteration
        if let Some(new_msg) = new_msg {
            state = update(state, new_msg);
        }
    }
}
```
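The counter loop calls `draw` and `update` without defining them. Below is a runnable sketch of both, under two assumptions of mine: `update` consumes the old state and returns a new one, and `draw` is a hypothetical stand-in view that prints the counter and replays a scripted list of actions instead of reading a keyboard. So that the example terminates, this loop stops when the script runs out.

```rust
use std::collections::VecDeque;

enum Message {
    CounterIncrease,
    CounterDecrease,
}

struct State {
    pub counter: usize,
}

// The only place where the state changes: take the old state, return the new one.
fn update(state: State, msg: Message) -> State {
    match msg {
        Message::CounterIncrease => State { counter: state.counter + 1 },
        // `counter` is a usize, so stop at 0 instead of panicking on underflow
        Message::CounterDecrease => State { counter: state.counter.saturating_sub(1) },
    }
}

// Hypothetical stand-in view: prints the state and "collects" the next user
// action from a prepared script instead of a keyboard.
fn draw(state: &State, script: &mut VecDeque<Message>) -> Option<Message> {
    println!("counter: {}", state.counter);
    script.pop_front()
}

fn main() {
    let mut script = VecDeque::from(vec![
        Message::CounterIncrease,
        Message::CounterIncrease,
        Message::CounterDecrease,
    ]);
    let mut state = State { counter: 0 };

    // Stop once the script runs out so the example terminates.
    // Prints "counter: 0", "counter: 1", "counter: 2", "counter: 1".
    while let Some(msg) = draw(&state, &mut script) {
        state = update(state, msg);
    }
}
```

The `saturating_sub` call is a design choice worth noting: with an unsigned counter, a plain `- 1` at zero would panic in debug builds.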

How to scale?

I know what you are thinking. A simple counter is not a real-world scenario. So where do you go from here? You could split the application into modules and set up chains of messages.

```rust
// only the screens used below are shown; PartialEq allows the comparison in `update`
#[derive(Clone, Copy, PartialEq)]
enum Screen {
    Start,
    Content,
}

enum Message {
    // globals
    ShouldExit,
    ChangeScreen(Screen),
    // modules
    User(MessageUser),
    Organization(MessageOrganization),
    Content(MessageContent),
}

struct State {
    pub screen: Screen,
    pub is_running: bool,
    pub user: StateUser,
    pub organization: StateOrganization,
    pub content: StateContent,
}

fn main() {
    let mut state = State {
        screen: Screen::Start,
        is_running: true,
        user: StateUser::default(),
        organization: StateOrganization::default(),
        content: StateContent::default(),
    };

    loop {
        let new_msgs: Option<Vec<Message>> = draw(&state);
        if let Some(new_msgs) = new_msgs {
            state = update(state, new_msgs);
        }

        // close the application
        if !state.is_running {
            break;
        }
    }
}
```

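```rust
fn update(mut state: State, new_msgs: Vec<Message>) -> State {
    for msg in new_msgs {
        match msg {
            // check globals
            Message::ShouldExit => {
                state.is_running = false;
                return state;
            }
            Message::ChangeScreen(screen) => state.screen = screen,
            // send to the modules: each module gets its own messages. A module
            // can't borrow the whole `state` while one of its fields is borrowed
            // mutably, so pass other fields explicitly if it needs them,
            // e.g. `state.content.update(msg, &state.user)`
            Message::User(msg) => state.user.update(msg),
            Message::Organization(msg) => state.organization.update(msg),
            Message::Content(msg) => {
                if state.screen == Screen::Content {
                    state.content.update(msg);
                }
            }
        }
    }
    state
}
```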
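One way to read "chains of messages" is that a module's update may emit follow-up messages, which the top-level update then processes in turn. The sketch below assumes that interpretation; the `LoggedIn`/`LoadContent` messages, the two-field `State` and the `update_one` helper are all hypothetical.

```rust
use std::collections::VecDeque;

// Hypothetical messages: logging in triggers a follow-up that loads content.
enum Message {
    LoggedIn(String),
    LoadContent(String),
}

#[derive(Default)]
struct State {
    user: Option<String>,
    loaded_for: Option<String>,
}

// Handles a single message and returns any follow-up messages,
// instead of reaching into another module directly.
fn update_one(state: &mut State, msg: Message) -> Vec<Message> {
    match msg {
        Message::LoggedIn(name) => {
            state.user = Some(name.clone());
            vec![Message::LoadContent(name)] // the next link in the chain
        }
        Message::LoadContent(name) => {
            state.loaded_for = Some(name);
            Vec::new() // end of the chain
        }
    }
}

// Processes messages in FIFO order until no follow-ups remain.
fn update(mut state: State, msgs: Vec<Message>) -> State {
    let mut queue: VecDeque<Message> = msgs.into();
    while let Some(msg) = queue.pop_front() {
        queue.extend(update_one(&mut state, msg));
    }
    state
}

fn main() {
    let state = update(State::default(), vec![Message::LoggedIn("ana".into())]);
    println!("{:?} {:?}", state.user, state.loaded_for); // Some("ana") Some("ana")
}
```

Because follow-ups go back into the same queue, a message that directly or indirectly re-emits itself would loop forever, so chains are worth keeping acyclic.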

Use different threads

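There is no rule saying that the view and the updater must run in lockstep. They can run concurrently, on different threads, without stepping on each other: the view only reads snapshots of the state and sends messages back through a channel, so there are no locks on the state to deadlock on and no lifetimes tying the two sides together.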

```rust
use tokio::sync::{mpsc, watch};
use tokio::time::{self, Duration, MissedTickBehavior};

enum Message {
    CounterIncrease,
    CounterDecrease,
}

#[derive(Clone, Debug)]
struct State {
    pub counter: usize,
}

// `draw` and `update` are defined elsewhere, with signatures like:
// async fn draw(state: &State) -> anyhow::Result<Vec<Message>>
// fn update(state: State, messages: Vec<Message>) -> anyhow::Result<State>

#[tokio::main]
async fn main() -> anyhow::Result<()> {
    // set up channels to pass the messages and state around
    let (message_tx, mut message_rx) = mpsc::channel::<Vec<Message>>(1000);
    let (state_change_tx, mut state_change_rx) = watch::channel::<Option<State>>(None);

    // handle the renderer
    let draw_handle = tokio::spawn(async move {
        let mut frame_timer = time::interval(Duration::from_millis(1000 / 60));
        frame_timer.set_missed_tick_behavior(MissedTickBehavior::Skip);

        loop {
            // maybe we don't want to render every time a message comes
            // but on an interval
            frame_timer.tick().await;

            // clone the snapshot so the watch borrow is not held across an .await
            let snapshot = state_change_rx.borrow_and_update().clone();
            let Some(state) = snapshot else {
                continue; // no state published yet
            };

            let new_messages: Vec<Message> = draw(&state).await?;
            if !new_messages.is_empty() && message_tx.send(new_messages).await.is_err() {
                break; // the updater has stopped
            }
        }
        anyhow::Ok(())
    });

    // handle the updater
    let upd_handle = tokio::spawn(async move {
        let mut curr_state = State { counter: 0 };

        // send an initial state for the renderer
        state_change_tx.send(Some(curr_state.clone()))?;

        // maybe we just want to update when a message comes in;
        // recv() returns None once the renderer has dropped its sender
        while let Some(mut messages) = message_rx.recv().await {
            // drain all the subsequent messages incoming
            while let Ok(more) = message_rx.try_recv() {
                messages.extend(more);
            }

            // handle the update of all messages incoming
            curr_state = update(curr_state, messages)?;
            state_change_tx.send(Some(curr_state.clone()))?;
        }
        anyhow::Ok(())
    });

    // wait on both tasks instead of busy-looping so the app doesn't close
    let (draw_res, upd_res) = tokio::join!(draw_handle, upd_handle);
    draw_res??;
    upd_res??;
    Ok(())
}
```
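The same split also works without an async runtime. This sketch swaps tokio for the standard library's `std::thread` and `std::sync::mpsc`; the `run` helper is hypothetical, and the calling thread plays the view by sending a fixed list of actions. One behavioral difference to keep in mind: an `mpsc` channel delivers every snapshot, whereas tokio's `watch` channel only keeps the latest one, which is usually what a renderer wants.

```rust
use std::sync::mpsc;
use std::thread;

enum Message {
    CounterIncrease,
    CounterDecrease,
}

#[derive(Clone)]
struct State {
    counter: usize,
}

fn update(state: State, msg: Message) -> State {
    match msg {
        Message::CounterIncrease => State { counter: state.counter + 1 },
        Message::CounterDecrease => State { counter: state.counter.saturating_sub(1) },
    }
}

// Hypothetical helper: the calling thread plays the view and sends `actions`,
// while a separate updater thread owns the state.
fn run(actions: Vec<Message>) -> (Vec<usize>, State) {
    let (message_tx, message_rx) = mpsc::channel::<Message>();
    let (state_tx, state_rx) = mpsc::channel::<State>();

    let updater = thread::spawn(move || {
        let mut state = State { counter: 0 };
        // recv() returns Err once every message sender is dropped, ending the loop
        while let Ok(msg) = message_rx.recv() {
            state = update(state, msg);
            // publish a snapshot; ignore the error if the view has gone away
            let _ = state_tx.send(state.clone());
        }
        // state_tx is dropped when this closure returns, ending the view's iterator
        state
    });

    for msg in actions {
        if message_tx.send(msg).is_err() {
            break; // the updater has stopped
        }
    }
    drop(message_tx); // no more user actions

    // the view only ever sees cloned snapshots, never the updater's own state
    let snapshots: Vec<usize> = state_rx.iter().map(|s| s.counter).collect();
    (snapshots, updater.join().unwrap())
}

fn main() {
    let actions = vec![
        Message::CounterIncrease,
        Message::CounterIncrease,
        Message::CounterDecrease,
    ];
    let (snapshots, final_state) = run(actions);
    println!("{snapshots:?} -> {}", final_state.counter); // [1, 2, 1] -> 1
}
```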

Why?

With a myriad of good, well-proven options available, why should you care about learning and using a new architecture? As with anything, every architecture has its benefits and trade-offs. Some make it easy to shoot yourself in the foot, while others become cumbersome to maintain and build on. You pick your poison. MVU is not without its faults: there is some overhead in passing messages and state around, and it is not as standard or widespread as other approaches, but I believe the benefits are significant.

You can test the state or the view in isolation. There is no need to worry about logic in the view, or about rendering and representation in the state. This can be quite valuable when you are debugging, setting up automated tests or splitting tasks across your team. Nothing stops you from having both a web interface and a TUI on the view side while sharing the same state. You could even use, let’s say, Rust for the state updater and plain JavaScript for the view.
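As a small illustration of testing in isolation, the sketch below checks a counter `update` without rendering anything, and checks two independent views (a TUI-style string and an HTML fragment, both hypothetical) against a hand-built state without any logic. It uses plain `assert_eq!` calls in `main` to stay self-contained; in a real crate these would live in `#[test]` functions.

```rust
struct State {
    counter: usize,
}

enum Message {
    CounterIncrease,
    CounterDecrease,
}

// The updater: pure logic, no rendering.
fn update(state: State, msg: Message) -> State {
    match msg {
        Message::CounterIncrease => State { counter: state.counter + 1 },
        Message::CounterDecrease => State { counter: state.counter.saturating_sub(1) },
    }
}

// Two independent views over the same state: pure representation, no logic.
fn view_tui(state: &State) -> String {
    format!("[ {} ]", state.counter)
}

fn view_html(state: &State) -> String {
    format!("<span class=\"counter\">{}</span>", state.counter)
}

fn main() {
    // The update is checked without rendering anything...
    assert_eq!(update(State { counter: 0 }, Message::CounterIncrease).counter, 1);
    assert_eq!(update(State { counter: 0 }, Message::CounterDecrease).counter, 0);

    // ...and each view is checked against a hand-built state, without any logic.
    let state = State { counter: 7 };
    assert_eq!(view_tui(&state), "[ 7 ]");
    assert_eq!(view_html(&state), "<span class=\"counter\">7</span>");
    println!("all checks passed");
}
```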

In the end, this model makes systems easier to maintain and reason about by simplifying the process without sacrificing flexibility or modularity. I’m definitely sticking with this one for the time being.